Small Language Models – Why Smaller AI Is the Smartest Move in 2026

For years, the AI race had one rule: bigger is better. More parameters, more data, more computing power. The giant wins.

In 2026, that rule is being rewritten. The most exciting trend in AI right now isn’t a trillion-parameter monster. It’s the rise of Small Language Models (SLMs), which are compact, fast, private, and surprisingly powerful.

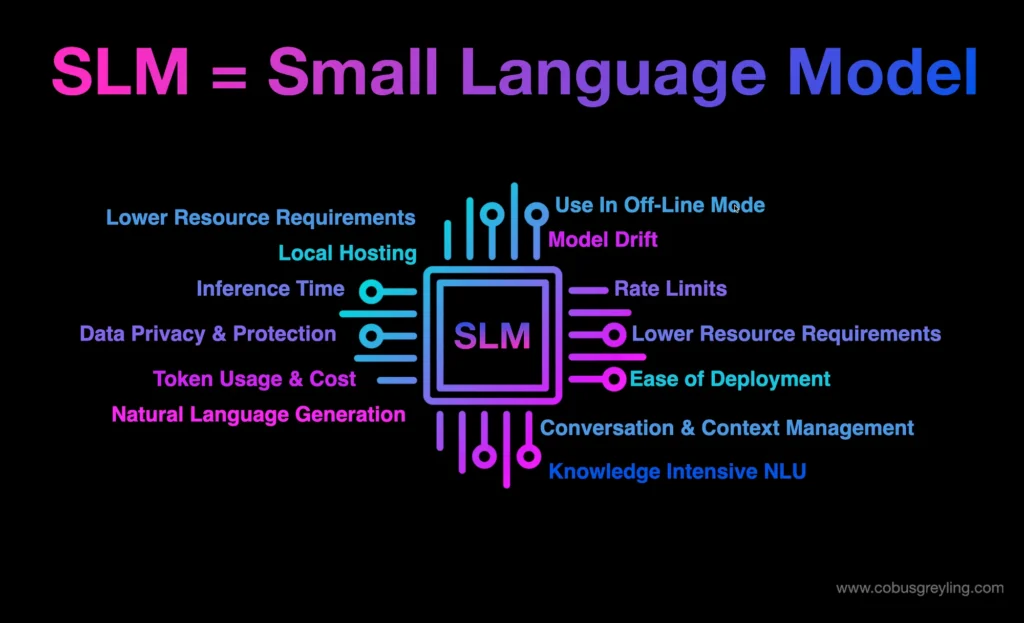

So, What Exactly Are Small Language Models?

Large Language Models (LLMs) such as GPT-4 (widely reported, though never officially confirmed, to have over 1 trillion parameters) require massive cloud infrastructure to operate. They’re powerful but expensive, often too slow for real-time use, and raise serious data privacy concerns because your data has to leave your device.

Small Language Models are AI models typically defined as having fewer than 10 billion parameters. Think of them as the efficient, specialized siblings of the giant LLMs. Models like Microsoft’s Phi-4 Mini (3.8B parameters), Meta’s Llama 3.2 (3B), Google’s Gemma, and Mistral 7B can run locally on laptops, phones, or on-premises servers, depending on their size, with no cloud required.

Think of it this way: LLMs are like hiring a world-renowned generalist consultant who charges a fortune and needs a whole office to work. SLMs are like having a highly trained specialist who works right at your desk, instantly, for a fraction of the cost.
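A rough back-of-the-envelope calculation shows why models in this size range fit on consumer hardware: the memory needed just to hold a model’s weights is approximately its parameter count times the bytes stored per parameter. The sketch below assumes 16-bit weights (2 bytes each) and, for comparison, 8-bit and 4-bit quantized weights; it ignores activations, the KV cache, and runtime overhead, so real usage is somewhat higher. The function name `weight_memory_gb` is hypothetical, and the parameter counts are the ones quoted above.

```python
# Back-of-the-envelope estimate of the memory needed to hold model weights.
# Assumption: memory ≈ parameter count × bytes per parameter. This ignores
# activations, the KV cache, and framework overhead, so real usage is higher.

BYTES_PER_PARAM = {
    "fp16": 2.0,  # 16-bit floating point
    "int8": 1.0,  # 8-bit quantized
    "int4": 0.5,  # 4-bit quantized
}

# Parameter counts quoted in the article, in billions.
MODELS = {
    "Phi-4 Mini": 3.8,
    "Llama 3.2 3B": 3.0,
    "Mistral 7B": 7.0,
}


def weight_memory_gb(params_billions: float, precision: str) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes).

    Billions of parameters × bytes per parameter gives gigabytes directly.
    """
    return params_billions * BYTES_PER_PARAM[precision]


if __name__ == "__main__":
    for name, size in MODELS.items():
        fp16 = weight_memory_gb(size, "fp16")
        int4 = weight_memory_gb(size, "int4")
        print(f"{name:<14} fp16: {fp16:5.1f} GB   int4: {int4:5.1f} GB")
```

Under these assumptions, Phi-4 Mini needs about 7.6 GB for its weights at 16-bit precision and about 1.9 GB at 4 bits, which is one reason quantized versions of such models can fit in the memory of a laptop or high-end phone, while a trillion-parameter model would need terabytes.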

Why Is It Exploding Right Now?

The shift toward SLMs in 2026 is being driven by practical needs and concrete results:

- Microsoft’s Phi-4 Mini (3.8B parameters) reportedly matches or beats many models in the 7B–9B range on reasoning benchmarks, at a fraction of the compute cost

- High-end smartphones are now shipping with built-in 1B–3B parameter models handling photo editing, notification summaries, and voice commands entirely offline

- In reported deployments, fine-tuned SLMs handle 75% of customer support tickets with higher accuracy than general-purpose LLMs, because they’re adapted to company-specific data

- Development teams run Llama 3.2 locally for code completion, ensuring proprietary code never leaves the building

- A healthcare provider uses Phi-3 Mini to process thousands of medical records per hour on its own servers, keeping patient data on-premises and HIPAA-compliant without sending it to an external cloud service
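The compute gap mentioned for Phi-4 Mini can be sanity-checked with a widely used rule of thumb: generating one token with a decoder-only transformer costs roughly 2 FLOPs per parameter (one multiply and one add per weight). The sketch below applies that approximation; the 8B comparison size is an assumption picked from the middle of the 7B–9B range, and the helper names `flops_per_token` and `relative_cost` are hypothetical.

```python
# Rough per-token inference cost using the common approximation that a
# decoder-only transformer spends about 2 FLOPs per parameter per generated
# token. Attention over long contexts adds extra cost this rule ignores.


def flops_per_token(params_billions: float) -> float:
    """Approximate forward-pass FLOPs to generate one token."""
    return 2 * params_billions * 1e9


def relative_cost(small_b: float, large_b: float) -> float:
    """Fraction of the larger model's per-token compute used by the smaller one."""
    return flops_per_token(small_b) / flops_per_token(large_b)


if __name__ == "__main__":
    small, large = 3.8, 8.0  # Phi-4 Mini vs. an assumed 8B comparison model
    print(f"{small}B model: {flops_per_token(small) / 1e9:.1f} GFLOPs/token")
    print(f"{large}B model: {flops_per_token(large) / 1e9:.1f} GFLOPs/token")
    print(f"relative cost: {relative_cost(small, large):.3f}")
```

By this estimate, a 3.8B model needs a little under half the per-token compute of an 8B model. Whether it also matches the larger model’s quality is a separate, benchmark-dependent question that this arithmetic does not answer.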

Real-World Applications You’ll See Everywhere

SLMs are quietly powering some of the most practical AI deployments of 2026:

- Customer Support: Domain-specific SLMs can outperform giant LLMs on in-domain questions because they’re fine-tuned on your exact products and policies

- On-Device AI: Your phone’s AI features — smart replies, photo descriptions, voice recognition — are increasingly powered by SLMs running locally

- Healthcare & Legal: Sensitive industries use SLMs on private servers to process confidential data without any cloud exposure

- Coding Assistants: Developers run SLMs inside their IDE for instant code suggestions without sending proprietary code to external APIs

- Edge Computing: SLMs power real-time AI where internet access is unreliable, such as factories, remote locations, and embedded devices

What This Means for You

The future of AI isn’t only in cloud-hosted giants. It’s also on your device and on your company’s servers: models tailored to your specific domain that are fast, private, and affordable.

SLMs prove that in AI, intelligence isn’t just about scale. It’s about the right model, in the right place, for the right task. The smartest AI strategy in 2026 might just be thinking smaller.

References:

Ahmad, S. (2026, February 24). Small language models (SLMs): The smart choice for 2026 AI deployments. LinkedIn. https://www.linkedin.com/pulse/small-language-models-slms-smart-choice-2026-ai-suleiman-ahmad-qo3tf

Machine Learning Mastery. (2026, February 23). Introduction to small language models: The complete guide for 2026. https://machinelearningmastery.com/introduction-to-small-language-models-the-complete-guide-for-2026/