Multimodal AI – When AI Finally Got Eyes, Ears, and a Voice

Remember when AI was just a chatbot you typed questions into? Those days are officially over.

We are living through one of the most exciting shifts in artificial intelligence: the rise of multimodal AI. And if you think this is just another buzzword, think again. Multimodal AI is quietly becoming the backbone of how we interact with machines in 2026.

So, What Exactly Is Multimodal AI?

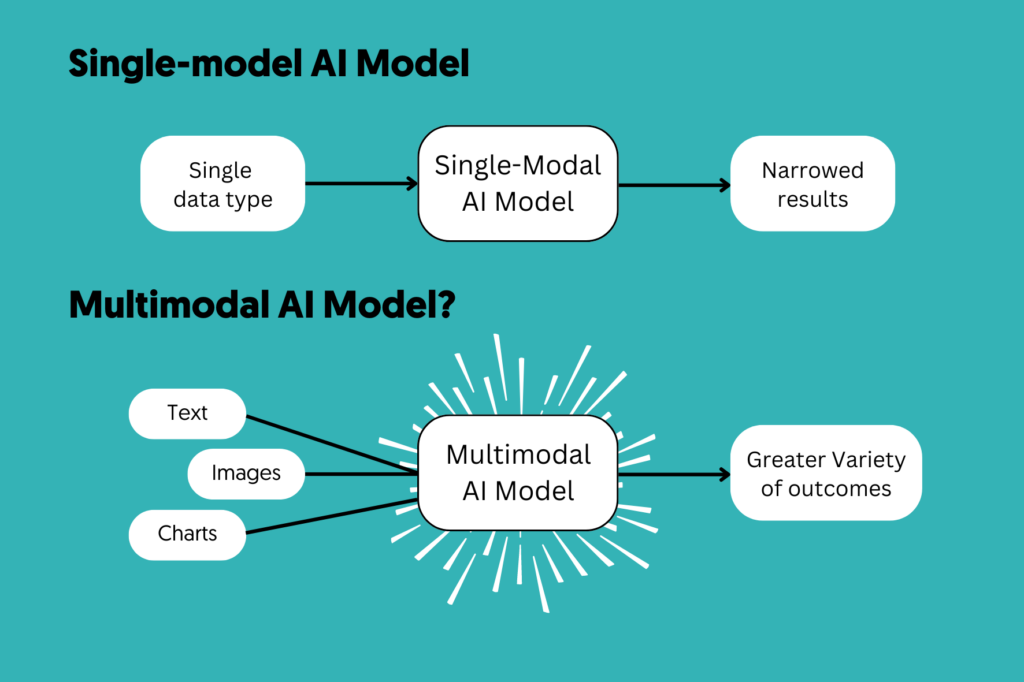

Traditional AI models were built around a single type of input, usually text. You typed, it responded. Simple, but limited.

Multimodal AI breaks that boundary. These models can process, and in many cases generate, text, images, audio, and video within a single system, much as a person takes in the world through several senses. Show it a photo and it can describe what’s in it. Play it an audio clip and it can transcribe and analyze it. Give it a video and it can summarize the narrative. It’s AI that perceives the world through multiple “senses” at once.

Think of it this way: earlier AI was like talking to someone on a phone call, limited to a single channel. Multimodal AI is like sitting across from someone in a room, with full sensory engagement.

Why Is It Exploding Right Now?

The momentum behind multimodal AI in 2026 is undeniable. Here’s what’s driving it:

- Models such as GPT-4o, Gemini 1.5, and Claude 3 have made multimodal input (images alongside text, at a minimum) the baseline rather than a premium feature

- Disney invested $1 billion in OpenAI specifically to leverage multimodal tools like Sora, enabling users to generate clips featuring Marvel, Pixar, and Star Wars characters

- ByteDance’s Seedance 2.0, released in early 2026, went viral for producing 2K AI video with native audio and lip-synced dialogue, a jaw-dropping demonstration of how far AI video generation has come

- In healthcare, multimodal models are being used for autonomous diagnostics: reading MRI scans, cross-referencing patient notes, and flagging anomalies, all at once

Real-World Applications You’ll See Everywhere

The impact isn’t just in labs or big tech companies. Multimodal AI is creeping into everyday use cases:

- Content Creation: Generate a thumbnail, write the caption, and produce the voiceover, all from one prompt

- Education: Upload a handwritten equation or a chart; the AI explains it step by step

- Customer Support: The AI reads a product photo, listens to the recorded complaint, and resolves the issue, with no human needed

- Research: Feed a PDF, a dataset, and an audio interview; the model synthesizes insights across all three

What This Means for You

Whether you’re a creator, developer, or business owner, multimodal AI is going to fundamentally change how you build, communicate, and create. The era of single-mode AI is behind us. The next chapter is one where AI sees the world as richly and fully as we do.

The question isn’t whether multimodal AI will impact your field. It’s whether you’ll be ready when it does.
