Context Engineering — The Skill That’s Replacing Prompt Engineering in 2026

Remember when everyone was talking about “prompt engineering” as the hottest skill in AI, back when how you phrased your question seemed to determine everything?

That era is ending. In 2026, the real competitive edge isn’t about crafting a clever prompt — it’s about Context Engineering. And if you’re building anything with AI today, this is the concept that will define whether your system actually works or constantly disappoints.

So, What Exactly Is Context Engineering?

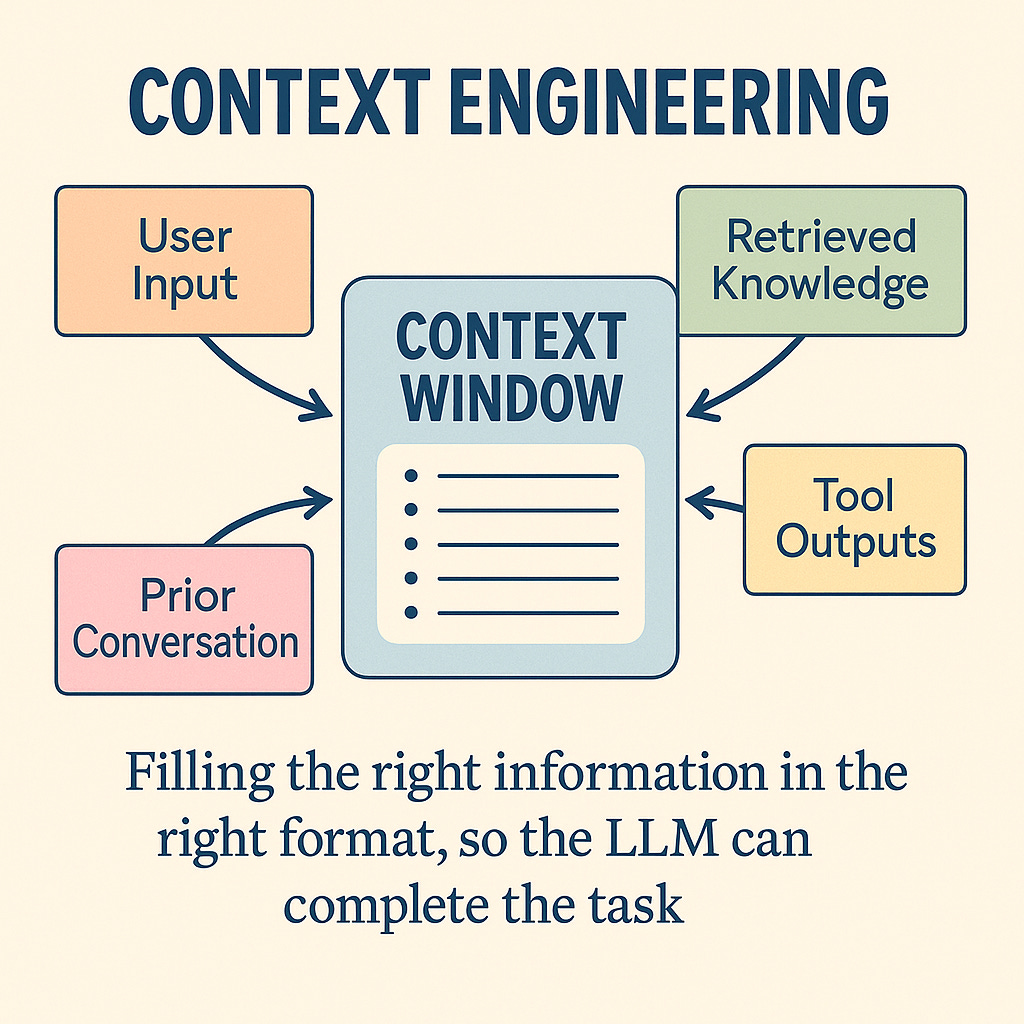

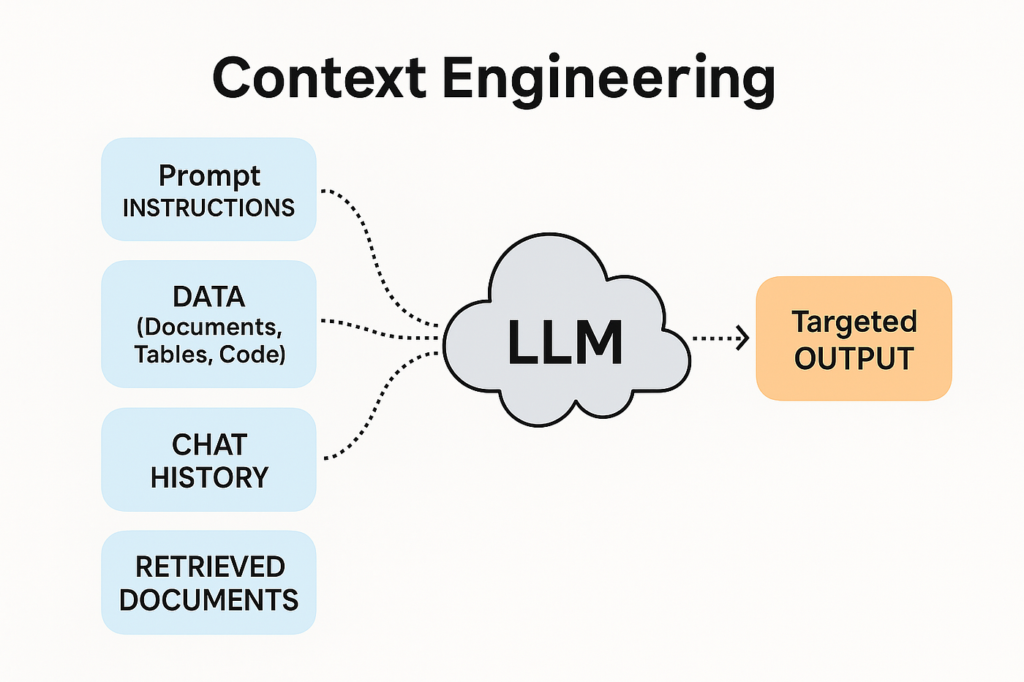

Prompt engineering was about how you asked the question. Context engineering is about what the AI sees before it even begins to answer.

Think of it this way: prompt engineering is like coaching an employee right before a meeting, giving last-minute instructions and hoping it goes well. Context engineering is like giving that employee access to the company’s knowledge base, past decisions, current data, and live tools, so they walk into every meeting already prepared.

In technical terms, context engineering means designing the entire information environment an AI model operates in — including memory, conversation history, retrieved documents, live API data, user profiles, and governance rules — all assembled dynamically before each query. Gartner made it official in July 2025, declaring “context engineering is in, and prompt engineering is out” as the defining shift for AI leaders.
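
To make "assembled dynamically before each query" concrete, here is a minimal Python sketch of that idea. It is an illustration under stated assumptions, not a reference implementation: the `ContextBundle` class, its field names, the section titles, and the `assemble_context` function are all hypothetical names chosen for this example, and a real system would fill the fields from its own memory store, retriever, and APIs.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ContextBundle:
    """What the model should see before it answers (hypothetical structure)."""
    governance_rules: list[str] = field(default_factory=list)
    user_profile: dict[str, str] = field(default_factory=dict)
    memory: list[str] = field(default_factory=list)
    retrieved_documents: list[str] = field(default_factory=list)
    live_data: dict[str, str] = field(default_factory=dict)
    conversation_history: list[tuple[str, str]] = field(default_factory=list)


def _section(title: str, lines: list[str]) -> str:
    """Render one labeled section, or an empty string if there is nothing to show."""
    if not lines:
        return ""
    return f"## {title}\n" + "\n".join(f"- {line}" for line in lines)


def assemble_context(bundle: ContextBundle, query: str) -> str:
    """Build the full model input for one query from the current bundle."""
    sections = [
        _section("Rules", bundle.governance_rules),
        _section("User profile", [f"{k}: {v}" for k, v in bundle.user_profile.items()]),
        _section("Memory", bundle.memory),
        _section("Retrieved documents", bundle.retrieved_documents),
        _section("Live data", [f"{k}: {v}" for k, v in bundle.live_data.items()]),
        _section("Conversation", [f"{role}: {text}" for role, text in bundle.conversation_history]),
    ]
    # Empty sections are skipped so the model only sees what actually exists.
    body = "\n\n".join(s for s in sections if s)
    question = f"## Question\n{query}"
    return f"{body}\n\n{question}" if body else question


# Example values are made up for illustration.
bundle = ContextBundle(
    governance_rules=["Never reveal payment details."],
    user_profile={"plan": "premium"},
    live_data={"order_status": "shipped"},
)
prompt = assemble_context(bundle, "Where is my order?")
```

The point of the sketch is that the user's question is the last and smallest part of the input. Everything above it is rebuilt for each query from whatever the system knows at that moment.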

Why Is It Exploding Right Now?

The momentum behind context engineering in 2026 is driven by one simple realization: AI is only as good as what it knows at the moment it responds.

- Hallucination reduction: Systems with structured retrieval and memory tend to hallucinate less, because their answers are grounded in real enterprise data rather than guesses

- Agentic AI needs it: As agentic AI grows, agents must carry institutional memory — definitions, workflows, past decisions — across long tasks. Context engineering provides that backbone

- Scalability: Context engineering is a large part of what lets AI move from answering isolated questions to acting as a reliable system component that plugs into logging tools, live metrics, and escalation policies

- Enterprise adoption: Organizations in 2026 are investing in semantic layers, context graphs, and active metadata platforms to turn their institutional knowledge into machine-readable context any AI system can use

- Performance gains: In 2026, the biggest AI performance improvements come from dynamic context selection, compression, and memory management — not from cleverly worded prompts
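
Dynamic context selection and compression, mentioned in the last point, can be sketched in a few lines of Python. This is a deliberately naive illustration: the `Snippet` class and the function names are hypothetical, word overlap stands in for a real relevance model such as embeddings, one word is assumed to cost one token, and "compression" here is plain truncation rather than summarization.

```python
from __future__ import annotations

import re
from dataclasses import dataclass


@dataclass
class Snippet:
    source: str
    text: str


def words(text: str) -> list[str]:
    """Lowercased word tokens, ignoring punctuation."""
    return re.findall(r"\w+", text.lower())


def estimate_tokens(text: str) -> int:
    """Crude estimate (assumption): one token per word."""
    return len(words(text))


def relevance(query: str, text: str) -> float:
    """Fraction of distinct query words that also appear in the text."""
    query_words = set(words(query))
    if not query_words:
        return 0.0
    return len(query_words & set(words(text))) / len(query_words)


def compress(text: str, max_words: int) -> str:
    """Naive compression: keep only the first max_words whitespace-separated words."""
    return " ".join(text.split()[:max_words])


def select_context(query: str, snippets: list[Snippet], budget: int,
                   per_snippet_cap: int = 50) -> list[Snippet]:
    """Pick the most relevant snippets that fit within a token budget."""
    ranked = sorted(snippets, key=lambda s: relevance(query, s.text), reverse=True)
    chosen: list[Snippet] = []
    used = 0
    for snippet in ranked:
        if relevance(query, snippet.text) == 0.0:
            break  # ranked order: everything after this is irrelevant too
        text = compress(snippet.text, per_snippet_cap)
        cost = estimate_tokens(text)
        if used + cost > budget:
            continue  # too large for the remaining budget; try smaller ones
        chosen.append(Snippet(snippet.source, text))
        used += cost
    return chosen
```

Calling `select_context("When does my order arrive?", snippets, budget=20)` on a mix of order records, refund policies, and unrelated posts would keep only the snippets that share words with the question and fit the budget. A production system would swap in a proper tokenizer and retriever, but the trade-off stays the same: relevance competes for limited space.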

Real-World Applications You’ll See Everywhere

Context engineering is quietly powering the most reliable AI deployments of 2026:

- Customer Support AI: Instead of a generic chatbot, a context-engineered system knows your account history, past complaints, current order status, and company policies — all before you finish typing

- Legal & Compliance: AI systems pull the latest regulations, company policies, and case history as live context — delivering advice grounded in current reality, not outdated training data

- Healthcare: Clinical AI assembles a patient’s full history, latest lab results, and treatment guidelines as context before making a recommendation, with the aim of reducing errors caused by missing or outdated information

- Developer Tools: Coding assistants like Cursor don’t just autocomplete; they index your codebase and can draw on architecture decisions and coding standards as persistent context

- Research: AI agents pull live papers, datasets, and prior findings as context — synthesizing across sources rather than relying on what they were trained on months ago

What This Means for You

The organizations pulling ahead in 2026 are not the ones with the biggest AI budgets. They are the ones that have turned their institutional knowledge into machine-readable context that any AI system can use at any time.

If prompt engineering was about talking to AI better, context engineering is about building smarter environments for AI to operate in. The question to ask yourself is no longer “How do I phrase this better?” — it’s “What does my AI need to know, and how do I make sure it always has it?”

References:

Atlan. (2026, March 2). What is context engineering? Complete 2026 guide. https://atlan.com/know/what-is-context-engineering/

Sombra. (2026, January 22). The guide to AI context engineering in 2026. https://sombrainc.com/blog/ai-context-engineering-guide