AI Reasoning Models – The Revolution of “Think Before You Speak”

For years, AI has been praised for its speed. Ask a question, get an answer in milliseconds. But speed without accuracy is just a fast mistake.

In 2026, a new breed of AI is changing the game, not by being faster, but by being smarter. Meet AI Reasoning Models: the systems that actually think before they respond.

What Are Reasoning Models?

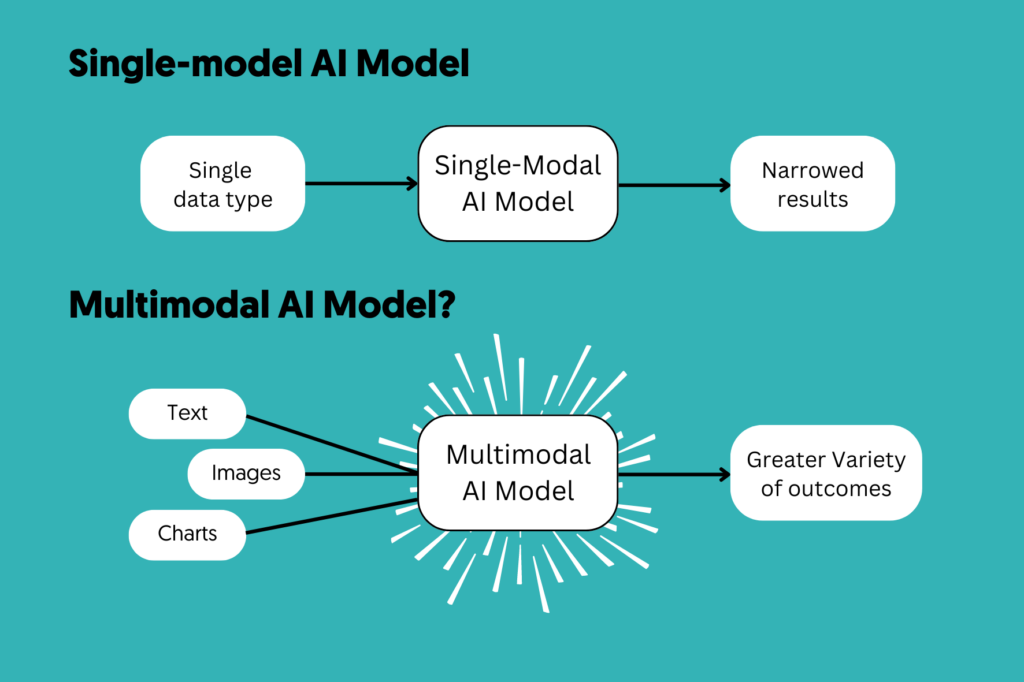

Most AI you’ve used works by predicting the next most likely word or token based on patterns in training data. It’s incredibly fast, but it struggles with complex, multi-step problems that require logical deduction.

Reasoning models are different. They use a technique called chain-of-thought reasoning, essentially an internal scratchpad where the model breaks a problem down step by step before giving you a final answer. On hard problems, giving the model more time to “think” generally produces more accurate output, at the cost of a slower response.

Think of it like the difference between a student who blurts out the first answer that comes to mind versus one who carefully works through the problem on paper first. Same raw knowledge — completely different quality of output.
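To make the scratchpad idea concrete, here is a minimal sketch of the prompt-level version of chain-of-thought: ask for step-by-step work, then separate the reasoning from the final answer. It is an illustration only, not how o3 or any other reasoning model works internally. The function names, the `Answer:` marker, and the sample response are all hypothetical, and the response is hand-written rather than real model output.

```python
import re
from typing import List, Optional, Tuple


def build_cot_prompt(question: str) -> str:
    """Wrap a question in an instruction that asks for step-by-step work."""
    return (
        "Solve the problem below. Work through it step by step, "
        "then give the final result on its own line as 'Answer: <value>'.\n\n"
        f"Problem: {question}"
    )


def split_scratchpad(response: str) -> Tuple[List[str], Optional[str]]:
    """Separate the reasoning steps from the final 'Answer:' line."""
    steps: List[str] = []
    answer: Optional[str] = None
    for raw_line in response.splitlines():
        line = raw_line.strip()
        if not line:
            continue  # skip blank lines
        match = re.match(r"^Answer:\s*(.+)$", line)
        if match:
            answer = match.group(1)
        else:
            steps.append(line)
    return steps, answer


# Hand-written stand-in for a model response (an assumption, not real output).
sample_response = """\
Step 1: The train covers 120 km in 2 hours, so its speed is 60 km/h.
Step 2: At 60 km/h, 3.5 hours covers 60 * 3.5 = 210 km.
Answer: 210 km
"""

steps, answer = split_scratchpad(sample_response)
print(len(steps), "reasoning steps; final answer:", answer)
```

The intermediate steps are the “paper” the careful student works on; only the last line is what the reader ultimately needs.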

The Numbers That Shocked the AI World

When OpenAI released o3, the AI community took notice — and for good reason:

- On ARC-AGI, an abstract visual reasoning benchmark that many thought was years beyond AI’s reach, o3 scored 87.5% in its high-compute configuration

- On AIME 2024 (elite-level math competition problems), o3 scored 96.7% — compared to o1’s 83.3%, a massive leap in just one generation

- These scores matter beyond the leaderboard: they show AI solving problems that genuinely require abstract thinking, planning, and reasoning
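One way to read the AIME figures above is to flip them into error rates. The short calculation below uses only the two percentages quoted in this article; the variable names are illustrative, and it assumes the two scores were measured under comparable conditions, which the article does not confirm.

```python
# Accuracy figures as quoted in this article (percent).
o1_accuracy = 83.3
o3_accuracy = 96.7

# Error rate is whatever is left over from 100%.
o1_error = 100.0 - o1_accuracy
o3_error = 100.0 - o3_accuracy

# How many times smaller o3's error rate is than o1's.
reduction_factor = o1_error / o3_error

print(f"o1 error rate: {o1_error:.1f}%")
print(f"o3 error rate: {o3_error:.1f}%")
print(f"o3 makes roughly 1/{reduction_factor:.0f} as many mistakes")
```

Seen this way, a 13.4-point gain in accuracy corresponds to roughly a fivefold drop in mistakes, which is why the jump looks larger than the raw percentages suggest.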

This is not incremental improvement. This is a paradigm shift.

From Autocomplete to Deep Thinking

Here’s the evolution in simple terms:

- GPT-3 era: Predict the next word really well

- GPT-4 era: Understand context, write coherently at length

- Reasoning model era: Analyze, deliberate, reason, and solve like a specialist consultant

The Feedback Loop Nobody’s Talking About

Here’s the part that makes reasoning models truly significant: they are now being used to help train the next generation of AI models. The outputs of o3-level reasoning are becoming training data for future systems, creating an accelerating feedback loop of improvement.

This means a new model release may not just be “a bit better.” It can compound on the reasoning capacity of its predecessor, so that gains in reasoning build on one another from one generation to the next.

Why This Matters to You

Whether you’re a researcher, developer, student, or professional, reasoning models are the tools that will handle your hardest, most intellectually demanding tasks. They’re not replacing creative or emotional intelligence. They’re taking the heavy cognitive lifting off your plate.

The shift from “fast AI” to “thinking AI” is already here. The real question is: are you using it?

References:

Bratincevic, N. (2025, March 27). OpenAI’s o3: Hype or a real step toward AGI? Forrester Research. https://www.forrester.com/blogs/openais-o3-hype-or-a-real-step-toward-agi/

Microsoft Azure AI Foundry. (2025, April 21). Everything you need to know about reasoning models. Microsoft Tech Community. https://techcommunity.microsoft.com/blog/azure-ai-foundry-blog/everything-you-need-to-know-about-reasoning-models-o1-o3-o4-mini-